AstraZeneca 2021 PSURs expose the low standards of vaccine safety regulations

And once you take a closer look at the free text entries, it is easy to see why CDC did not do ANY text mining of v-safe data

Summary:

MHRA (the UK’s counterpart to the FDA) did not publish the initial AstraZeneca PSURs (Periodic Safety Update Reports) for 2021, and only released them after a FOIA request

The initial PSURs explain how AZ did its risk-benefit calculations

We can take those numbers and map them to the v-safe data

Initial analysis suggests that v-safe would have already shown warning signs if the data had been properly analyzed in 2021

I also discuss the relationship between the background incidence rate and the risk window of analysis, and ask whether regulators are giving Pharma companies too much leeway to set long risk windows that make their products look safer

I think the CDC refused to publish any paper with automated text analysis of the v-safe free text entries because it would have become obvious that there was hardly any PII in those entries

We can use the AESI background incidence rate paper cited in the AZ PSUR as the “official” data and use it for future v-safe calculations

I was recently alerted about the AstraZeneca PSUR data release on Twitter:

When you read the article, you see they mention something very odd (emphasis mine):

As the vaccine was rolled out, the UK’s version of the FDA, the Medicines and Healthcare Regulatory Agency, was receiving confidential safety reports from AstraZeneca twice per year. While later PSURs have been released, the earliest ones were withheld from public view. Through FOIA requests, ICAN’s attorneys have obtained these reports for December 2020–June 2021 and June 2021–December 2021.

When you think about it, that doesn’t make much sense at all, does it?

After all, at least when you consider the cumulative nature of safety monitoring, the later publications are usually continuations of the earlier publications.

But it does make sense if the earlier reports contain some fine-grained information which exposes the fact that a very low standard was used to clear the vaccine of any wrongdoing, and future publications only discuss it in summary form.

In other words, the earliest publications might have spelled out some specific calculations, while future publications can just proceed with the assumption that the calculations are already public knowledge. For example, in PSUR 1, AstraZeneca compares expected-vs-actual GBS (Guillain-Barré syndrome) cases.

As you can see, they use a background rate of 2.035 cases per 100K person-years and calculate that the expected number of cases over a 14-day risk window is 171.66. The observed number of cases is significantly higher than expected, and somehow they still conclude that GBS is not a big concern[1]!
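As a rough sanity check, the two quoted figures can be turned around to see how much exposure they imply, since expected cases = background rate x person-years at risk. This is only a back-of-envelope sketch: the variable names are mine, the PSUR's own exposure figure is not reproduced here, and the dose estimate assumes every dose contributes exactly one full 14-day window.

```python
# Figures quoted from the PSUR in the paragraph above.
RATE_PER_100K_PY = 2.035   # background GBS rate, cases per 100,000 person-years
EXPECTED_CASES = 171.66    # expected GBS cases in the risk window
RISK_WINDOW_DAYS = 14      # length of the risk window

# expected = rate * person_years  =>  person_years = expected / rate
implied_person_years = EXPECTED_CASES / (RATE_PER_100K_PY / 100_000)

# Assumption (not from the PSUR): each dose adds one full 14-day window.
implied_doses = implied_person_years * 365 / RISK_WINDOW_DAYS

print(f"implied person-years at risk: {implied_person_years:,.0f}")
print(f"implied doses under the one-window assumption: {implied_doses:,.0f}")
```

If the exposure stated in the PSUR differs a lot from these implied values, that would be worth a closer look at how the expected count was derived.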

And this kind of fine-grained calculation is not provided in the future reports.

For example, in the PSUR for 2022, they just note that the GBS case was already analyzed and concluded that they did not find any causal association.

As you can see, they use every single trick in the book to avoid blaming GBS on their vaccine - unreliable voluntary reporting, limited information, conservative estimates of background rates, COVID-induced GBS.

What is background incidence rate?

You can see that they refer to a background incidence rate (IR) of 2.035.

Here is how you can think about it: if you observe 100,000 people for a year, you would expect to see about 2.035 new cases of GBS on average.

Cross-verification

At a rate of 2.035 per 100K people per year (20.35 per million), and for the US population of 330 million, you would expect to see about 2.035 x 10 x 330 ≈ 6,715 cases of GBS diagnosed in the US each year. That is in the right ballpark.

Calculating the expected value for v-safe for a specific risk window

v-safe had 10 million participants and tracked the participants for 12 months each.

Of these, 5.24 million had the Pfizer vaccine.

Since some participants had multiple vaccines, I created a “pfizer-only” dataset of participants who only took the Pfizer vaccine.

There are almost exactly 5 million such participants.

At the background incidence rate of 2.035 per 100K, you would expect to see 2.035 x 10 x 5 = 101.75 cases of GBS among the Pfizer-only v-safe participants over 365 days.

(2.035 per 100K = 20.35 per 1 million = 5 x 20.35 = 101.75 per 5 million)

Reducing the risk window to 7 days

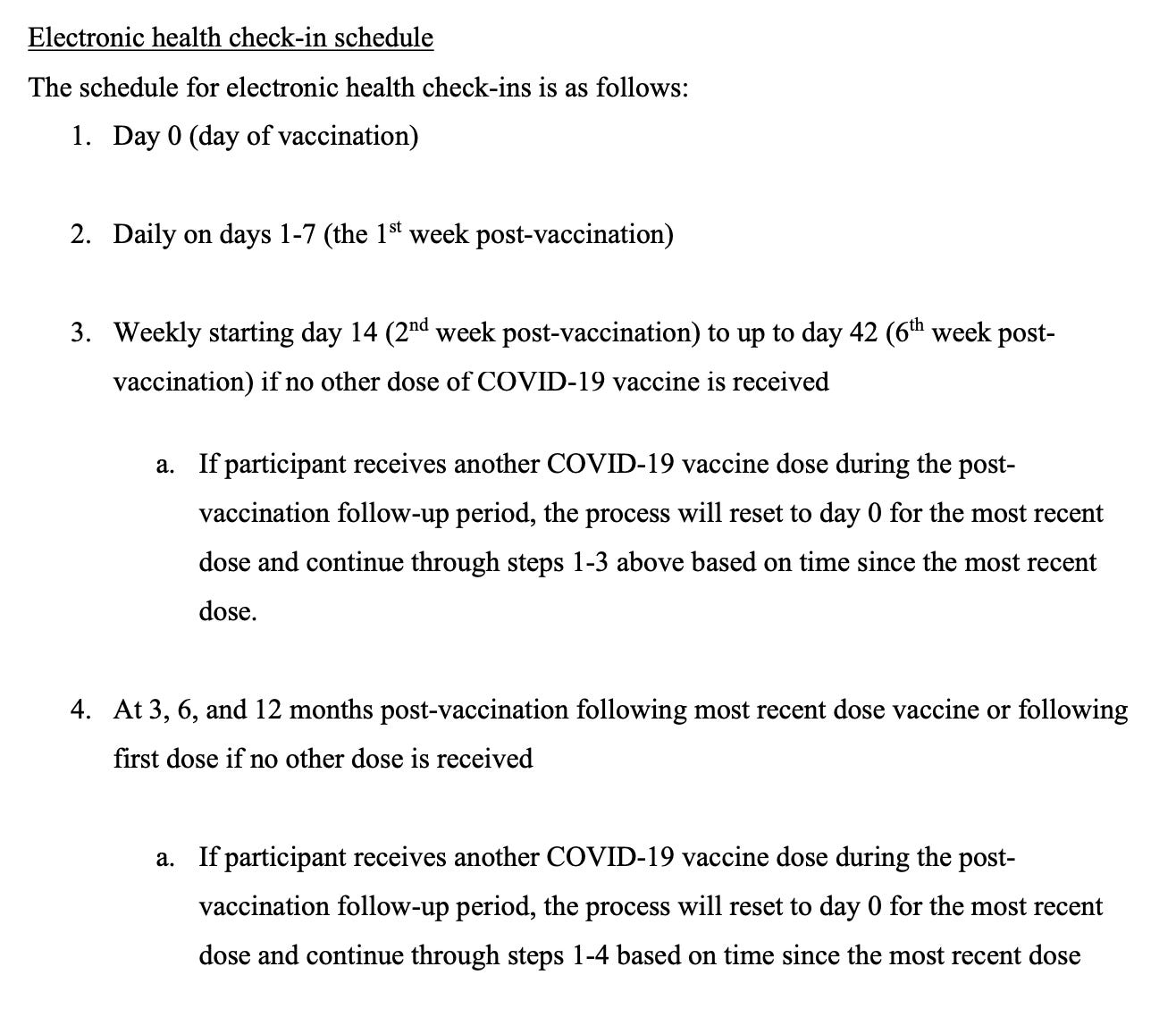

On my previous article, Liz Willner from OpenVAERS asked why no row in the Interim Release 1 CSV file had both SYSTEMIC_REACTION_OTHER (SRO) and the SYMPTOMS_DESCRIPTION (SD) field filled out.

As I mentioned in my answer, the free text for the SRO is only shown during the first week, and the free text field for SD is only shown after the first week.

You can verify this by looking at the screenshots for all the different checkins in the sample v-safe data provided by Aaron Siri. The red box highlights the entries for the first week of checkins, when the free text allows the participant to fill out the SYSTEMIC_REACTION_OTHER field.

Everything above the red box shows all the checkins where the SYMPTOMS_DESCRIPTION field is shown to the user in the v-safe app.

This means all the entries in the covid_meddra_sro CSV file correspond to symptoms reported within a week of vaccination, and all the entries in the covid_meddra_sd CSV file correspond to symptoms reported after the first week.

Based on our above calculations, for a population of 5 million (Pfizer only), we expect to see 7 * (101.75/365) = 1.95 cases of GBS in the covid_meddra_sro file.
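The scaling from a full year down to a 7-day window can be written out as a small helper. This is a minimal sketch: the function name `expected_in_window` is mine, and it assumes every participant is observed for the whole window and that the background rate is constant over time.

```python
RATE_PER_100K_PY = 2.035     # background GBS rate cited in the PSUR
PFIZER_ONLY = 5_000_000      # approximate Pfizer-only v-safe participants

def expected_in_window(rate_per_100k_py, people, window_days):
    """Expected cases if each person is observed for window_days."""
    annual_cases = rate_per_100k_py / 100_000 * people
    return annual_cases * window_days / 365

full_year = expected_in_window(RATE_PER_100K_PY, PFIZER_ONLY, 365)
first_week = expected_in_window(RATE_PER_100K_PY, PFIZER_ONLY, 7)

print(f"expected over 365 days: {full_year:.2f}")   # 101.75
print(f"expected over 7 days:   {first_week:.2f}")  # 1.95
```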

Let us see what our query tells us

So we got 16[2] Pfizer-only participants who filled out a free text entry which was coded as GBS in the covid_meddra_sro file. This is much larger than the roughly 2 cases we expected.
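One common way to put a number on "much larger than expected" is a Poisson tail probability: how likely is it to see at least this many cases by chance if the background rate is right? The sketch below uses the 16 unique registrants and the expected count of 1.95. It assumes case counts follow a Poisson distribution and that every coded entry is a genuine new case, which the registrant-by-registrant review in this article shows is not a safe assumption. The function name `poisson_tail` is mine.

```python
import math

def poisson_tail(observed, expected, terms=200):
    """P(X >= observed) for X ~ Poisson(expected), summed term by term."""
    # First term is P(X == observed); each later term follows from the
    # recurrence P(X == k + 1) = P(X == k) * expected / (k + 1).
    term = math.exp(-expected) * expected**observed / math.factorial(observed)
    total = 0.0
    for k in range(observed, observed + terms):
        total += term
        term *= expected / (k + 1)
    return total

p_value = poisson_tail(observed=16, expected=1.95)
print(f"P(at least 16 cases | expected 1.95) = {p_value:.2e}")
```

Under those assumptions the probability is tiny, so the raw count alone would look like a strong signal. Whether the raw count can be trusted is a separate question.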

Does this mean we can declare the COVID19 vaccines as unsafe?

Not yet.

Let us first see what the actual free text entries say.

The first thing you notice is that even though there are 16 unique registrants with GBS symptoms, there are only 4 unique registrants whose information is available in Interim Release 1.

Let us now consider all the free text entries submitted by each of these registrants.

(Remember, you can see the coding used for all the free text entries which have been submitted to date, because we do already have the full datasets for those).

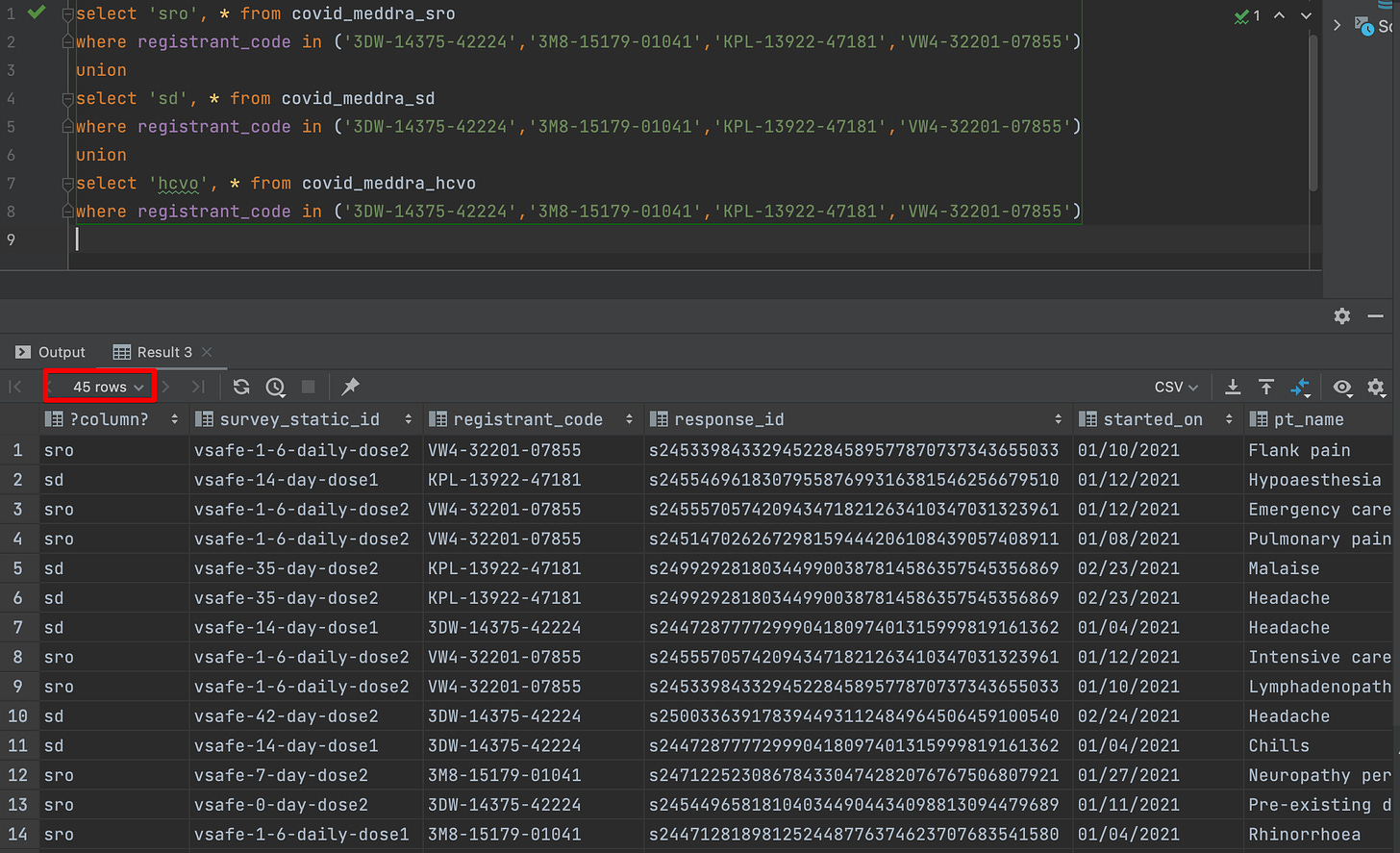

This is the query I used to construct the dataset:

Registrant 1 : 3DW-14375-42224

So this participant wrote this:

“Tingling up both legs to pelvis-required Benadryl was like guillian barre“

And here are all the free text entries:

Each row is not a unique free text entry, but each combination of survey_static_id and started_on will be a free text entry.
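The grouping rule can be sketched as follows. The rows are made up purely for illustration; only the column names `survey_static_id`, `started_on` and `pt_name` come from the data described in this article.

```python
from collections import defaultdict

# Made-up rows for illustration only: one row per coded term.
rows = [
    {"survey_static_id": "survey_A", "started_on": "2021-01-01", "pt_name": "TERM_1"},
    {"survey_static_id": "survey_A", "started_on": "2021-01-01", "pt_name": "TERM_2"},
    {"survey_static_id": "survey_B", "started_on": "2021-01-08", "pt_name": "TERM_3"},
]

# Each (survey_static_id, started_on) pair identifies one free text entry,
# which may have been coded to several terms.
entries = defaultdict(list)
for row in rows:
    entries[(row["survey_static_id"], row["started_on"])].append(row["pt_name"])

for (survey_id, started_on), terms in entries.items():
    print(survey_id, started_on, terms)
```

Here three coded rows collapse into two free text entries, which is why counting rows would overstate the number of entries a registrant submitted.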

Notice that there are two SD free text entries - one on 01/04/2021 and the next one on 02/24/2021

But we only have one SD free text entry in the Interim Release 1 (IR1) CSV file.

On the other hand, we only have two SRO free text entries - one on 12/21/2020 and the other one on 01/11/2021. This means we got all the SRO entries in the IR1 batch.

Mapping SRO entry to survey date

Next we would like to map the SRO entry to the survey date.

For this registrant, it is not too hard to infer this by reading the two SRO text entries (and especially once you consider what is missing between the free text entries)

“Tingly leg- felt asleep. opposite leg of arm injection“ => 12/21/2020

“Tingling up both legs to pelvis-required Benadryl was like guillian barre“ => 01/11/2021

Registrant 2 : 3M8-15179-01041

Registrant 2 has exactly one entry in IR1.

And has 9 different covid_meddra_SRO entries over 4 different survey dates (I have added red highlighting to make it easy to distinguish between the dates).

It is pretty clear that the lone free text entry corresponds to the 01/04/2021 survey.

Notice that GBS only makes an appearance on 01/27/2021, which is Day 7 after the second dose (based on the survey_static_id).

In other words, our criteria included this entry even though they only had symptom onset more than 6 days[3] after Dose 1.

Registrant 3 : KPL-13922-47181

Registrant 3 has three IR1 entries.

And they have 2 SRO entries and 2 SD free text survey entries (once again I have added the red highlight to distinguish between them)

Once again, it is easy to map the SRO entries to the survey dates by reading them.

“Had had bilateral sciatica last night for several hours“ => 12/30/2020

“Numb toes 2 days in a row. I developed Guilin Barre 2 weeks after a flu shot 5 years ago, and haven't had a flu shot since. It started with my lower legs.“ => 01/03/2021

And we can also map the SD entry which has been published based on how different it is from the other list of symptoms.

“Pain and numbness in the vaccination arm.“ => 01/12/2021

In this case, we can probably rule out GBS as an adverse event which occurred after vaccination.

Registrant 4: VW4-32201-07855

This is the list of IR1 entries for registrant 4:

And these are the corresponding coding survey entries:

This registrant stopped filling out v-safe 7 days after Dose 2, but does have an entry in the v-safe call center logs, and it looks like they filed a VAERS report.

What we can infer from these 4 examples

We can infer the following based on the 4 examples:

a) the presence of the pt_name field does not automatically imply that the participant had that adverse reaction after the vaccine

b) in turn, this means we cannot just use the raw numbers to calculate if the observed number of cases was greater than the expected number of cases

c) for now, it looks like the CDC used some software for doing the coding (in Oct 2022). And if it was manual, then they did a very sloppy job.

d) the data is too incomplete to make any inferences, and it is possible we may have to wait till all the data is published before figuring out the full extent of vaccine injuries reported in v-safe

In the case of GBS, the information we have to date tells us that unless all 12 other registrants were somehow incorrectly coded as GBS, v-safe should have marked it as an adverse reaction caused by the vaccine.

What is a good risk window for vaccine safety studies?

I reduced the risk window from 14 days (which is used in the PSUR and many other vaccine safety studies) to 7 days based on the structure of the v-safe data collection process.

In fact, I mentioned this in one of my previous articles (emphasis mine):

One, the farther away an event from the date of vaccination, the less likely it is to be due to the vaccine. This means the farther you push out this window, the more easier it is to get your numbers to fall within the background rate.

Two, for an analysis like this to be internally consistent, they should be able to produce the same results even if they choose smaller time windows, such as 7 days and 14 days. The “pull forward effect” suggests that the background rate test will fail if they do this (but I would love to be proven wrong - is the New Zealand government willing to publish these numbers?)

Three, the total number of deaths for the age-wise cohorts do not have…

And the AstraZeneca PSUR provided me some additional data to be able to do this calculation.

Using a 7-day window seems to show that more cases of GBS were identified than expected. Is it fair to shrink the risk window like this? Can it become too small?

I don’t know, so I asked ChatGPT :-)

As you can see, the downsides it mentions are not likely to affect reasonably large datasets like v-safe.

But here is a much more interesting thing: the Pharma companies seem to want to lengthen the risk window to make the vaccine look safer.

That is what AstraZeneca did for GBS:

Notice that Observed and Expected values seem to converge around day 42.

And of course, this makes absolutely no sense!

First of all, it does not make any sense to give the same weight to events happening closer to vaccination and events happening further away from it, as that would invert the entire premise of the Bradford Hill criteria!

Second, the vaccine pushers also dismiss VAERS death reports clustered around the date of vaccination saying “someone is much more likely to report a death within one day of vaccination to VAERS than they would later on”.

Well, if that is true, then clearly it makes no sense at all to keep lengthening risk windows for vaccine safety studies. You cannot have it both ways, and then go on to claim that these are the most well studied vaccines in history!

Third, what is a good maximum value for the risk window? Why not expand it to 365 days and make the vaccine look much, much safer?
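To make the dilution effect concrete, here is a small sketch of how the expected count grows with the window while a cluster of early cases stays fixed. The expected values use the 101.75 cases per year worked out earlier for 5 million people. The observed count of 10 cases, all in the first week and none afterwards, is a hypothetical number chosen only for illustration.

```python
ANNUAL_EXPECTED = 101.75          # expected GBS cases per year in 5 million people
HYPOTHETICAL_EARLY_CASES = 10     # made-up: all cases occur in the first 7 days

expected_by_window = {}
ratio_by_window = {}
for days in (7, 14, 42, 365):
    expected = ANNUAL_EXPECTED * days / 365
    expected_by_window[days] = expected
    # Observed stays at 10 because no new cases appear after week one.
    ratio_by_window[days] = HYPOTHETICAL_EARLY_CASES / expected

for days in expected_by_window:
    print(f"{days:>3} days: expected {expected_by_window[days]:7.2f}, "
          f"observed/expected {ratio_by_window[days]:.2f}")
```

In this toy scenario the same 10 early cases are about five times the expected count at 7 days, but fall below the expected count by day 42, simply because the denominator kept growing.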

Now let us take a look at why CDC might have avoided text mining v-safe free text entries.

Why CDC avoided text mining v-safe free text entries

The CDC could reasonably claim that they did not anticipate so many reports and were backlogged, and hence they could not allocate the resources required for manual coding for these free text entries.

But why didn’t they do some kind of automated analysis[4]?

Remember, the CDC did not actually do any text mining for any of the unsolicited symptoms.

CDC's v-safe text mining is laughable

And they cannot make an excuse such as “Well, there are so many possible adverse events. How can we mine the free text for all of them?”

First of all, that reveals such a poor understanding of how far the fields of Natural Language Processing and Machine Learning have progressed over the last 10 years or so.

But even if the best that the CDC could come up with is to use string regular expressions (which is at least 10 years behind the state-of-the-art), they still had a list of about 15 AESIs which they even marked in their own v-safe protocol!

So why didn’t they use string regex for mapping these free text entries to these AESIs?

In fact, most medical terms are not even common English words, so there is very little chance of ambiguity. Something as simple as a direct keyword search would have helped them identify many of these adverse events.
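As a rough illustration of how little is needed, here is a sketch of a keyword search for GBS that tolerates common misspellings. The pattern is my own guess at a reasonable rule, not anything the CDC used, and the sample texts are the free text entries quoted earlier in this article.

```python
import re

# Matches "Guillain-Barre" and misspellings such as "guillian barre" or
# "Guilin Barre", plus the abbreviation "GBS". Illustrative pattern only.
GBS_PATTERN = re.compile(r"\bgu[a-z]{2,7}[\s-]+barr[eé]\b|\bGBS\b", re.IGNORECASE)

sample_entries = [
    "Tingly leg- felt asleep. opposite leg of arm injection",
    "Tingling up both legs to pelvis-required Benadryl was like guillian barre",
    "Numb toes 2 days in a row. I developed Guilin Barre 2 weeks after a flu "
    "shot 5 years ago, and haven't had a flu shot since.",
    "Pain and numbness in the vaccination arm.",
]

flags = [bool(GBS_PATTERN.search(text)) for text in sample_entries]
for text, flagged in zip(sample_entries, flags):
    print("FLAG" if flagged else "    ", text[:60])
```

The third entry is flagged even though it describes a past episode after a flu shot, so a match like this is best treated as a prompt for human review and not as a confirmed case.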

Why didn’t the CDC use even rudimentary text search to find these AESIs?

I think it had to do with the fact that they could not have published anything substantial without providing some sample free text entries, and people would have immediately noticed that there is almost no personally identifying information in them!

Take a look at these free text entries in the one paper that the CDC did publish:

In fact, it doesn’t even make much sense when you think about it, because all the PII was already collected in the v-safe app during registration:

And the maximum number of characters allowed for free text entries was only 250!

If anything, people were trying to abbreviate a lot of words so they could fit everything within the space provided.

Why in the world would anyone want to add[5] their PII into the textbox?

We can do the same calculations for other AESIs

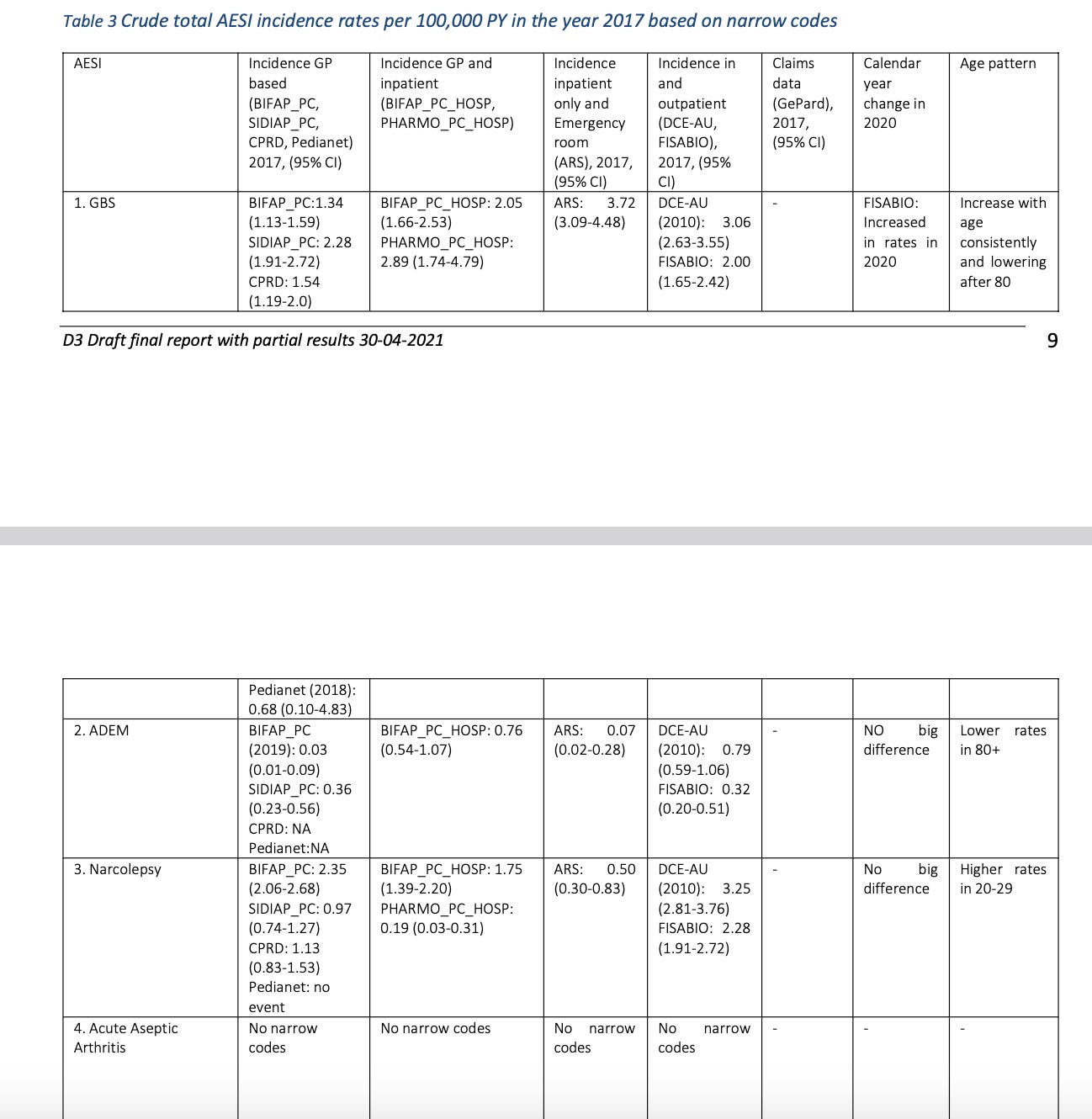

In their analysis, AstraZeneca mention that they based their calculations on the following document (you can see this link mentioned in the top image which shows the Observed vs Expected cases for GBS)

“Background rates of Adverse Events of Special Interest for monitoring COVID-19 vaccines“

This paper calculated the background rates for most of the important AESIs related to the COVID19 vaccines.

As you can see, this is considered the “official”[6] source for the background AESI rates.

And the paper seems to be quite thorough, as it derives its numbers from multiple different databases.

This means it is now possible for v-safe analysts to use that background incidence rate data to calculate expected-vs-observed rates for the other AESIs in v-safe.

After all, we are using an “official” source for our calculations!

[1] If you read the rest of the report, they do it by using Brighton Collaboration levels and selecting only those cases which are at the highest levels of diagnostic certainty, which reduces the number of observed cases.

[2] This number is even worse for Moderna: there are 26 such cases.

[3] At the moment, it is not clear to me whether such an entry should make the cut for symptom onset “within a week”. I am open to suggestions.

[4] Even if you think automating this can lead to errors, there was nothing preventing the CDC from flagging the entries which had any of the words from the adverse events of special interest (AESI) which were omitted from their list of solicited symptoms.

Then a human could have manually reviewed these entries. There were only a handful of such entries for GBS, and probably not more than a few hundred per AESI.

[5] Some people would probably say that some information is not directly PII, but can become identifying if a condition is extremely rare etc. I suppose that is true, but the CDC could have simply omitted such entries while writing their publications.

[6] While it is possible a lot of people have referred to that document in their analysis before, it does matter that we now have confirmation that it is indeed the official source.

if you need more "expected" data, see these twitter threads for the post-authorization studies of pfizer/biontech, moderna and AZ. all the files are in the first link, the threads are just my usual style of skim-reading, but they might be helpful in getting through the material quicker.

pfizer: https://twitter.com/a_nineties/status/1750875050834575756

moderna: https://twitter.com/a_nineties/status/1752943956072030613

AZ: https://twitter.com/a_nineties/status/1754584313125900645

i linked them because it's extremely satisfying to see you do the actual number work i know is there but lack the skills to do. if you want more PSURs, this link needs to go in your bookmarks: https://tkp.at/2024/01/17/psur-datenarchiv-zu-comirnaty-vaxzevria-nuvaxovid-und-spikevax-neue-updates-zu-spikevax-und-vaxzevria/

great work, eager to see you expand on this!
